Sound and hearing

‘Sound’ is a psychological experience, a perception of a mechanical force. This mechanical force is simply a movement, a vibration, in some medium. So, ‘sound’ begins with vibrations. For us, these are almost always vibrations of the air around us; but if we were dolphins or sharks, we’d be discussing vibrations of water. These vibrations require a medium (like air, water, metal, etc.), which is why you can’t have sound in the vacuum of space, for instance.

It is interesting to think of the advantages that hearing has over vision - or at least the contexts where hearing is especially useful. Communication is one of the major ones (sound allows for 360 degree communication over long distances, even at night); navigation is another one, again especially at night; objects can be recognized by their characteristic sounds; hearing works around corners and other obstacles that could block your vision; etc. Remember, babies don’t wave when they want your attention.

These vibrations have two aspects that we care about: frequency and amplitude. Amplitude is related to the energy of the vibration and is perceived as loudness; frequency is perceived as pitch. This is easiest to understand in the context of sinusoidal vibrations, which produce so-called ‘pure tones’. Any mixture of frequencies is a complex tone. Here are some examples:

http://www.fearofphysics.com/Sound/sounds.html

this is even more complex (Pretty Lights):

A pure tone is a sinusoidal vibration of air pressure at a single frequency. Most sounds you encounter are mixtures of different frequencies, that is, complex tones. A complex tone can be thought of as a mixture of pure tones, and this can be shown by graphing its frequency spectrum.
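To make the idea of a frequency spectrum concrete, here is a minimal Python sketch that builds a pure tone and a complex tone and lists the frequencies present in each. The sample rate, the 440 Hz fundamental, the harmonic amplitudes, and the helper names `tone` and `spectrum_peaks` are all assumptions chosen for illustration; the spectrum is computed with a slow textbook discrete Fourier transform rather than any particular audio library.

```python
import cmath
import math

SAMPLE_RATE = 8000  # samples per second (assumed for this sketch)
N_SAMPLES = 800     # 0.1 s of signal, so spectrum bins are 10 Hz apart


def tone(components):
    """Sum sinusoids given as (frequency_hz, amplitude) pairs."""
    return [
        sum(a * math.sin(2 * math.pi * f * n / SAMPLE_RATE) for f, a in components)
        for n in range(N_SAMPLES)
    ]


def spectrum_peaks(signal, threshold=0.05):
    """Return {frequency_hz: amplitude} for every frequency bin above threshold."""
    n = len(signal)
    peaks = {}
    for k in range(n // 2):
        # Naive DFT: correlate the signal with a complex sinusoid at bin k.
        coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(signal))
        amplitude = 2 * abs(coeff) / n
        if amplitude > threshold:
            peaks[round(k * SAMPLE_RATE / n)] = round(amplitude, 2)
    return peaks


# A pure tone: one sinusoid at 440 Hz.
pure = tone([(440, 1.0)])
# A complex tone: the same 440 Hz plus two weaker harmonics.
complex_tone = tone([(440, 1.0), (880, 0.5), (1320, 0.25)])

print(spectrum_peaks(pure))
print(spectrum_peaks(complex_tone))
```

The pure tone should show a single peak at 440 Hz, while the complex tone should show three peaks, one for each pure tone that was mixed in. Changing the relative amplitudes of the harmonics changes the shape of the spectrum without changing the fundamental frequency.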

The shape of this spectrum is captured in another sound quality, related to but distinct from pitch and loudness, called timbre:

http://sites.sinauer.com/wolfe3e/chap10/timbreF.htm

(Even timbre does not capture everything about a sound; attack and decay play a role too.)

Audibility spectrum

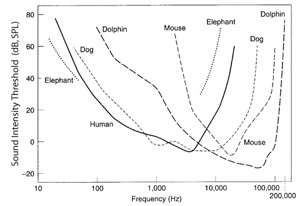

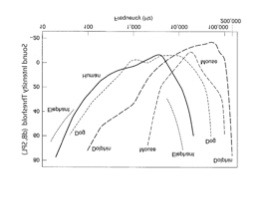

As with our other senses, we are only ‘tuned in’ to a particular range of the available information (just like we are only sensitive to the ROYGBIV ‘visible spectrum’ even though there’s a much broader electromagnetic spectrum out there). Other creatures have different tunings; what you’re tuned in to depends on what’s useful to you in the environment in which you evolved. Here are the frequency sensitivities of some other creatures to put things in perspective:

•Bats can hear frequencies between 10,000 and 120,000 Hz.

•Dogs can hear frequencies between 40 and 50,000 Hz.

•Cats can hear frequencies between 100 and 60,000 Hz.

•Mice can hear frequencies between 1,000 and 100,000 Hz.

•Elephants can hear frequencies between 1 and 20,000 Hz.
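As a quick way to compare these ranges, the sketch below stores them in a table and asks which creatures could, in principle, detect a given frequency. The animal values are copied from the list above; the human range of 20 to 20,000 Hz is the commonly cited figure, and the function name `who_can_hear` is made up for this example. The sketch only checks the outer limits of each range and ignores how intense the sound would need to be.

```python
# Approximate hearing ranges in Hz: (lowest, highest).
HEARING_RANGES_HZ = {
    "human": (20, 20_000),      # commonly cited limits (assumed here)
    "bat": (10_000, 120_000),
    "dog": (40, 50_000),
    "cat": (100, 60_000),
    "mouse": (1_000, 100_000),
    "elephant": (1, 20_000),
}


def who_can_hear(frequency_hz):
    """Return the creatures whose hearing range includes frequency_hz."""
    return sorted(
        name
        for name, (low, high) in HEARING_RANGES_HZ.items()
        if low <= frequency_hz <= high
    )


print(who_can_hear(40_000))  # a tone far above the human limit
print(who_can_hear(10))      # a very low rumble
```

With these numbers, a 40,000 Hz tone falls inside the ranges of bats, cats, dogs, and mice but outside the human range, and a 10 Hz rumble falls inside the elephant range only.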

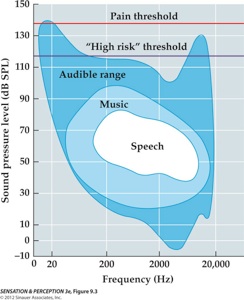

Keep in mind that when measuring the hearing range for a given organism, it matters how intense (‘loud’) the sound is. All the animals above have a peak sensitivity, so they are best able to hear sounds within a narrow range. If you want an animal to detect a sound that it is not very sensitive to, that sound had better be very loud. You can measure the maximum frequency range of hearing for an animal by seeing whether it can detect a particular frequency when you are allowed to make it very intense. For instance, a human will only have a chance of detecting a 20 Hz or a 20,000 Hz tone if those sounds are very, very intense (high amplitude). However, a human will never be able to hear a 40,000 Hz tone no matter how intense it is. You can be breaking all the windows in the laboratory but you won’t hear the tone itself.

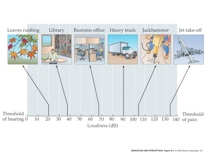

Speaking of intensity:

This site provides some other interesting tidbits about sound and hearing:

http://hypertextbook.com/physics/waves/sound/

Localization

Localization means figuring out where a sound source is located. OK, fine, you hear a bear at night, but now what? Is it in front of you? To your left? Is it near or far? There are two aspects to localization: the first is determining which direction the source is in, relative to you, and the other is figuring out how far away it is.

To figure out where a sound is, your brain takes advantage of the fact that you have two ears (or, to put it another way, one of the reasons you have two ears in the first place is to help you localize sounds). How the brain uses the two ears to find sound sources is actually pretty much common sense.

Think about the following: if you hear a sound, and your auditory system (the parts of your brain devoted to analyzing sound stimuli) notices that it is slightly louder in your left ear than in your right ear, then which side of your body do you think it guesses the sound is coming from? Well, if it is louder in your left ear than your right, then it must be on your left side. This cue is called Interaural Level Difference. (Why is it louder in the first place? Because your head blocks some of the sound, creating a sound shadow.)

You might have also figured out that if a sound is on your left side then those sound vibrations must hit your left ear before they hit your right ear. So, if your auditory system hears a sound, but notices that the signal arrived in your left ear before your right, it will guess (most of the time correctly) that the sound is on your left. That cue is called Interaural Time Difference. Of course, this time difference is small, less than one millisecond (1/1000th of a second) even for a sound directly to one side, but your auditory system is sensitive enough to detect this difference.

If a sound signal arrives in your two ears at the same time and with the same intensity, then these two cues alone cannot tell you where the sound is coming from: it could be straight ahead, directly behind you, or above you. But you have a way out, and that is to rely on the shape of your pinnae (the outer pieces of flesh that you usually call your ‘ears’). Since your head and pinnae are asymmetric, they make subtle changes to the frequencies in the incoming sounds (the so-called head-related transfer function, or HRTF) that your auditory system can listen for and use to make inferences about sound source location.
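To get a feel for how small the Interaural Time Difference is, here is a rough Python sketch. It uses a standard spherical-head approximation (often attributed to Woodworth), which is not something these notes derive; the head radius, the speed of sound, and the function names `itd_seconds` and `guess_side` are assumptions made for illustration. The second function simply combines the signs of the two interaural cues, which is a cartoon of what the auditory system does, not a model of it.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # air at about 20 degrees C (assumed)
HEAD_RADIUS_M = 0.0875      # typical adult head radius (assumed)


def itd_seconds(azimuth_deg):
    """Estimate the interaural time difference for a distant source.

    azimuth_deg: 0 is straight ahead, +90 is directly to the right,
    -90 is directly to the left. A positive result means the sound
    reaches the right ear first.
    """
    theta = math.radians(azimuth_deg)
    # Spherical-head approximation: extra path length around the head.
    return HEAD_RADIUS_M / SPEED_OF_SOUND_M_S * (theta + math.sin(theta))


def guess_side(itd_s, ild_db):
    """Guess the side of a source from the signs of the two cues.

    itd_s: positive if the right ear heard the sound first.
    ild_db: positive if the sound was louder in the right ear.
    """
    votes = (itd_s > 0) - (itd_s < 0) + (ild_db > 0) - (ild_db < 0)
    if votes > 0:
        return "right"
    if votes < 0:
        return "left"
    return "ambiguous"  # e.g. straight ahead, behind, or above


for angle in (0, 30, 90):
    print(angle, "degrees:", round(itd_seconds(angle) * 1000, 3), "ms")

print(guess_side(itd_seconds(-90), ild_db=-6.0))
print(guess_side(0.0, 0.0))
```

Under these assumptions the largest time difference, for a source directly to one side, comes out at roughly 0.66 ms. When both cues are zero the sketch returns "ambiguous", which is the situation where the frequency changes introduced by the pinnae become the useful cue.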


1nm; 1/100,000 of a second